CheckApp vs Grammarly vs ChatGPT vs Copyscape

Before you publish that client article, what checks actually ran?

A 1,500-word fintech piece. Your writer used AI for the first draft, edited it down, and handed it off for review. It looks clean. The headlines are tight. The tone matches the brand.

But did anyone check whether that statistic in the third paragraph exists? Did anyone check whether the phrase in the intro came from a competing blog? Did anyone check whether the phrase "shown to reduce cholesterol" triggers an FDA compliance flag?

Three reviewers signed off on a wellness piece before anyone noticed a sentence stating that a supplement was "clinically proven to reduce inflammation in 72 hours." That is an FDA violation. The client would have owned the exposure.

This is what the tooling landscape for professional writers actually looks like: four tools that most agency editors reach for, each excellent at one thing, each silent on the rest. Here's an honest account of what each one does and what it doesn't.

The honest matrix

What each tool actually checks across the six dimensions that matter most in professional publishing:

| Dimension | Grammarly | ChatGPT | Copyscape | CheckApp |
|---|---|---|---|---|
| Grammar / spelling | Excellent | Partial | None | Yes (LanguageTool + LLM) |
| Plagiarism | None | None | Excellent | Yes (Copyscape / Originality) |
| Fact-check with real sources | None | Unreliable | None | Yes (retrieval-backed, not memory) |
| AI-generated detection | None | None | Yes (Originality tier) | Yes (same providers) |
| Brand tone matching | None | Manual only | None | Yes (upload your brief once) |
| Legal / compliance risk | None | Unreliable | None | Yes (FDA, GDPR, defamation) |

None of these tools is wrong to use. Each one is wrong to use alone as your quality gate.

Grammarly: excellent at one job

Grammarly is the best real-time grammar layer on the market. It lives inside Gmail, Google Docs, Notion, and Slack. Writers use it while writing, not after. That's the right moment for grammar: in the flow, before habits compound.

The native integrations are genuinely good. Clarity suggestions, tone detection, sentence-level rewrites — these are solid signals for any writer paying attention.

What Grammarly does not do: check whether any claim in the article is true. It does not know that "the average B2B buyer now engages with 13 pieces of content before purchasing" is a number that appears in hundreds of articles and traces back to no actual study. It does not flag the phrase "clinically proven" as a potential compliance risk. It does not know that the second paragraph echoes a competitor's blog post.

That's not a criticism. Grammarly was built to make writing cleaner, not to verify facts. It does its job well. The mistake is assuming it covers more than it does.

Who should use it: every writer, while writing. Not as a pre-publish gate.

ChatGPT as a fact-checker: a confident wrong answer is worse than no answer

Many agency editors have started using ChatGPT to verify claims — paste the sentence, ask "is this true?" The problem is structural, not just a version issue.

Large language models are trained to produce plausible-sounding text. Fact-checking is a retrieval problem: you need to find a real source, read what it actually says, and compare. An LLM answering from memory is not retrieving anything. It is generating text that sounds like a response. When it doesn't know something, its default behavior is to complete the sentence anyway, not to say "I don't have a reliable source for this."

The citation-hallucination problem is well documented. Ask ChatGPT to provide a URL for a claim and you'll regularly receive URLs that do not exist, formatted convincingly with journal names, page numbers, and author attributions. For a deeper breakdown of why retrieval-before-reason changes this, the fact-check pipeline post covers the mechanism in detail.

ChatGPT is a powerful tool for brainstorming angles, drafting outlines, and generating first-pass copy. It is a liability when used as a pre-publish verification step — because a confident wrong answer, handed off to a client, is worse than no answer at all.

Who should use it: idea exploration, structure, first drafts. Not pre-publish fact verification.

Copyscape: does one thing, does it well

Copyscape has indexed over two billion pages. Its plagiarism detection across that corpus is genuinely strong. If a passage in your article appears somewhere on the public web, Copyscape will find it. The $0.03-per-check pricing is transparent and low enough for routine use.

The limit is scope. Copyscape checks for copied text. It does not check whether the facts in that text are accurate. It does not check whether the claims are legally safe. It does not check whether the article matches the brief you provided. It does not check whether the writing sounds like your client's brand.

A Copyscape pass is one layer of one type of assurance. That's fine — as long as everyone understands it's one layer.

The 3-sentence lift from a Wikipedia vitamin D entry that nearly shipped in a wellness piece? Copyscape would have caught it. The fabricated B2B statistic? Copyscape would not have flagged it — because the text wasn't copied from anywhere. It was generated to sound authoritative.

Who should use it: as one layer in a quality pipeline. Not as a standalone gate.

CheckApp: the layer that composes the others

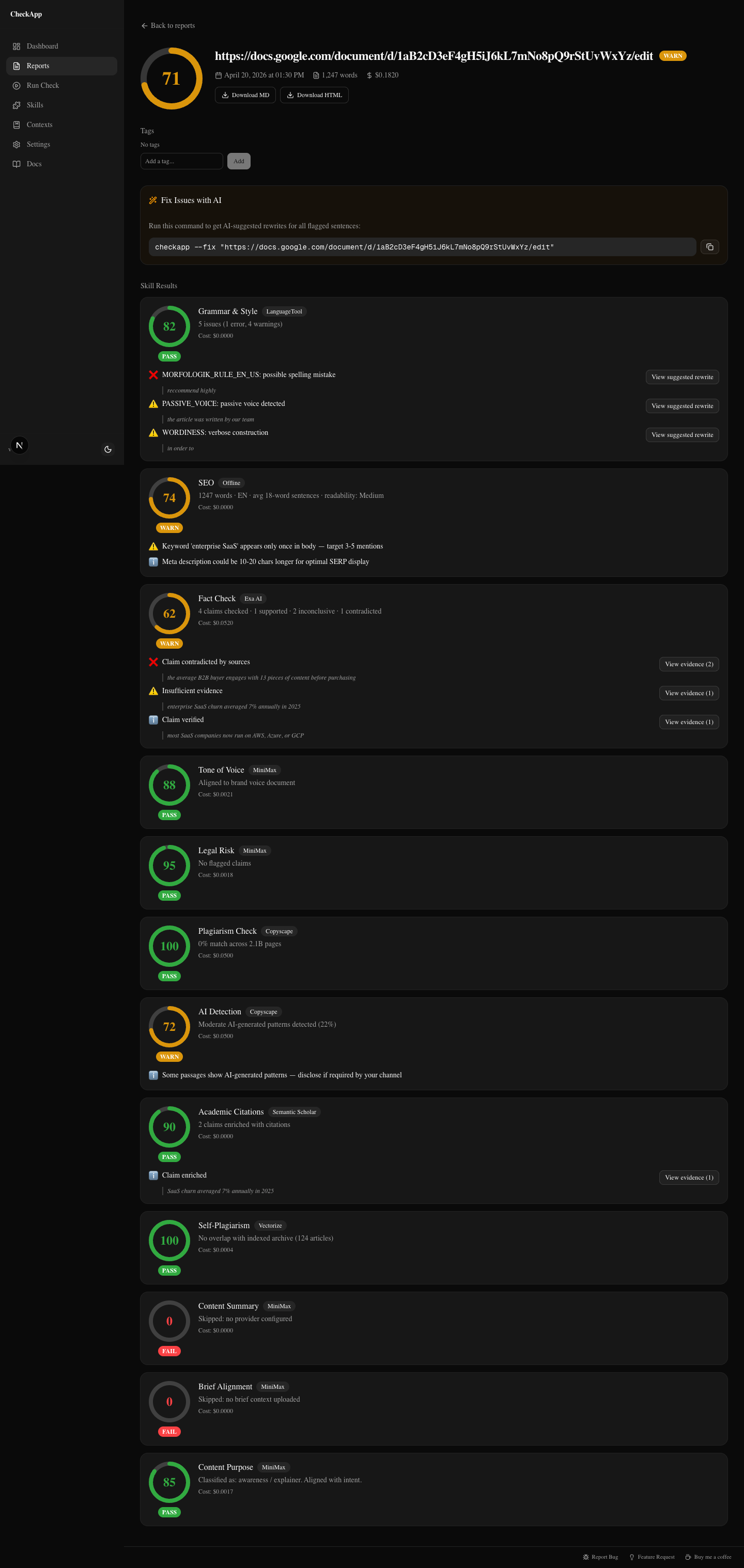

CheckApp runs 12 skills against any article before it publishes. The design premise is that plagiarism, fact-checking, grammar, tone matching, and compliance belong in a single pre-publish run, not spread across separate tools on separate platforms.

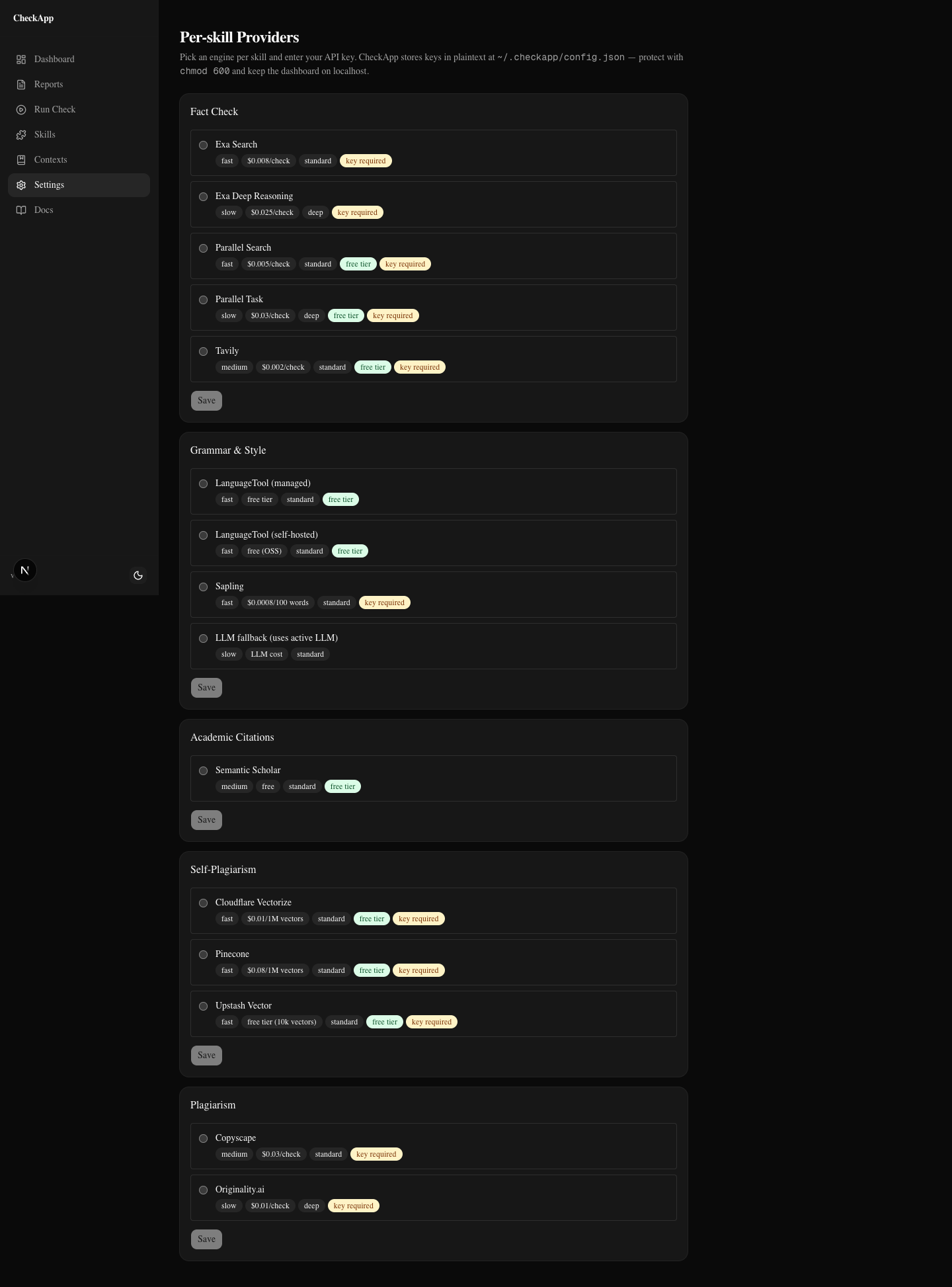

The dashboard (runs locally, opens in your browser) is where editors configure settings, upload tone guides and client briefs, run checks, and review reports. You pick providers per skill from the Settings panel: Exa Search, Exa Deep Reasoning, or Parallel Task for fact-check; LanguageTool or Sapling for grammar; Copyscape or Originality for plagiarism and AI detection; Claude or MiniMax for the LLM-backed skills. Providers are swappable: if you're already paying for Copyscape, plug it in. If you want to keep costs near zero on most checks, the free tiers (LanguageTool for grammar, Semantic Scholar for academic citations, Upstash Vector for self-plagiarism) get you most of the way there.

The fact-check skill retrieves real sources. Not LLM memory. Exa Search (neural retrieval), Exa Deep Reasoning, or Parallel Task query against the live web, return URLs with relevance scores, and pass those to the LLM for comparison against the claim. For medical, scientific, or financial claims, academic citations auto-merge via Semantic Scholar at no additional cost. That's the difference between a confident wrong answer and a cited verdict with an evidence trail.
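To make the retrieve-then-compare order concrete, here is a minimal Python sketch. It is not CheckApp's code: `Source`, `Verdict`, `fact_check`, the `min_score` threshold, and the two stand-in functions are all hypothetical names invented for illustration. The point it shows is that the judge only ever sees retrieved evidence, and that an empty evidence list produces "unverified" rather than a guess.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Source:
    url: str
    snippet: str
    score: float  # relevance score reported by the retriever


@dataclass
class Verdict:
    claim: str
    label: str  # "supported", "contradicted", or "unverified"
    evidence: List[Source]


def fact_check(
    claim: str,
    retrieve: Callable[[str], List[Source]],
    judge: Callable[[str, List[Source]], str],
    min_score: float = 0.5,  # hypothetical cutoff, not a CheckApp setting
) -> Verdict:
    """Retrieve first, then reason only over what was retrieved."""
    sources = [s for s in retrieve(claim) if s.score >= min_score]
    if not sources:
        # No usable evidence: decline to answer instead of guessing.
        return Verdict(claim, "unverified", [])
    return Verdict(claim, judge(claim, sources), sources)


# Stand-ins for a real search provider and a real LLM call (assumptions).
def fake_retrieve(claim: str) -> List[Source]:
    return []


def fake_judge(claim: str, sources: List[Source]) -> str:
    return "supported"


result = fact_check(
    "The average B2B buyer engages with 13 pieces of content before purchasing",
    fake_retrieve,
    fake_judge,
)
print(result.label)  # unverified
```

In a real setup the two callables would wrap whichever search provider and LLM you configured; the control flow around them is what keeps a missing source from turning into a confident answer.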

The legal risk skill scans for FDA health claims, GDPR data handling, defamation risk, and false promises. One skill call. The tone of voice skill checks the article against a brand doc you upload once per client — it doesn't just rate formality, it flags phrases that don't match the voice brief.
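For a feel of what a compliance flag looks like in its simplest form, here is a pattern-based sketch in Python. It is an assumption-heavy illustration, not how CheckApp's legal risk skill works: the `RULES` table, the category names, and the `scan` function are hypothetical, and a handful of regular expressions will miss far more than an LLM-backed scan reviewed by a human.

```python
import re
from typing import List, NamedTuple


class Flag(NamedTuple):
    category: str
    phrase: str
    start: int  # character offset of the match in the text


# Hypothetical rule list for illustration only; real rules would come from
# counsel or a maintained policy document.
RULES = {
    "health_claim": [r"clinically proven", r"shown to reduce \w+"],
    "false_promise": [r"guaranteed results?"],
}


def scan(text: str) -> List[Flag]:
    """Return every rule match in the text, ordered by position."""
    flags = []
    for category, patterns in RULES.items():
        for pattern in patterns:
            for match in re.finditer(pattern, text, flags=re.IGNORECASE):
                flags.append(Flag(category, match.group(0), match.start()))
    return sorted(flags, key=lambda f: f.start)


sample = "This supplement is clinically proven to reduce inflammation in 72 hours."
for flag in scan(sample):
    print(flag.category, repr(flag.phrase), flag.start)
```

Each flag carries the matched phrase and its offset, so an editor can jump to the sentence and decide whether to rewrite it.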

BYOK means the API calls go from your machine directly to your configured providers. CheckApp takes no margin on API costs. The dashboard shows a cost estimate before you run. For a typical 800-word article with free-tier providers, the cost approaches zero. With Exa Deep Reasoning for fact-check and Copyscape for plagiarism, it's closer to $0.25. You set that configuration once and know your cost per article.
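The per-article arithmetic is simple enough to sketch in Python. Treat this as an illustration under stated assumptions: only the Copyscape figure ($0.03 per check) comes from this article, the 22-cent fact-check figure is a placeholder picked so the total lands near the $0.25 mentioned above, and the skill names and functions are hypothetical rather than CheckApp's own.

```python
from typing import Dict

# Per-article prices in US cents, keyed by skill (integers avoid float drift).
# Free-tier providers cost nothing per check.
FREE_TIER: Dict[str, int] = {"grammar": 0, "citations": 0, "self_plagiarism": 0}

# "plagiarism": 3 reflects Copyscape's $0.03 per check from the article.
# "fact_check": 22 is a PLACEHOLDER, not a published provider price.
PAID: Dict[str, int] = {**FREE_TIER, "plagiarism": 3, "fact_check": 22}


def estimate_cents(prices: Dict[str, int]) -> int:
    """Sum the per-skill prices for one article run."""
    return sum(prices.values())


def format_usd(cents: int) -> str:
    """Render a whole number of cents as a dollar string."""
    return f"${cents // 100}.{cents % 100:02d}"


print(format_usd(estimate_cents(FREE_TIER)))  # $0.00
print(format_usd(estimate_cents(PAID)))       # $0.25
```

Swap in the actual prices from your own provider accounts to get a number you can quote per article.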

CheckApp is not a content generator. It does not write articles. It is not a replacement for a skilled editor — it finds problems and returns evidence, and you decide what to fix. It's also not tuned for every language: English and Hebrew are calibrated; other scripts are detected but not optimized.

What it is: the pre-publish gate for professional work where "wrong" has consequences.

CheckApp is also available as a CLI for automation in larger content pipelines.

When to use what

A short decision guide for agency editors:

| Situation | Right tool |
|---|---|
| Writing in Gmail, Docs, or Notion | Grammarly: real-time grammar in the flow |
| Exploring angles, drafting outlines, brainstorming | ChatGPT: idea generation, not verification |
| One-off plagiarism check on a web-facing piece | Copyscape: 2B+ page index, $0.03/check |
| Pre-publish on work where errors have consequences | CheckApp: 12 skills, sourced findings, legal scan |

The four tools aren't in competition. Grammarly makes writing better while it's being written. ChatGPT accelerates the ideation and drafting phase. Copyscape is a fast, well-priced plagiarism layer. CheckApp is the gate that runs once, after writing, before the article leaves your hands.

Agencies that run all four spend less than $0.40 per article on the combined pipeline. That's a cheap way to not hand a client an FDA violation.

Run it on your next piece

CheckApp is open source, MIT-licensed, and available today. No waitlist, no signup, no locked-in subscription.

Install it from GitHub, open the dashboard, upload your first article. The Setup screen walks you through adding one provider — start with LanguageTool (free) for grammar and Semantic Scholar (free, no key) for academic citations. Add Exa Search for fact-check when you're ready to pay a few cents per article. Run your first check for under five cents.

If something breaks, open an issue. That's how it gets sharper.

